If you’ve ever been in a meeting where someone casually said “we just need to add RAG,” and you nodded while secretly searching what that meant under the table, this post is for you.

The AI space moves so fast that even experienced developers can suddenly feel surrounded by unfamiliar buzzwords overnight. Terms like temperature, tokens, context window, embeddings, fine-tuning, and RAG get thrown around constantly, often without much explanation.

This post is meant to build that foundation. I’ll walk through the core concepts and definitions you need to understand modern AI development. Don’t just skim the terms — try to understand the idea behind each one. Treat this post as a reference you can revisit from time to time, so the next time your company or team discusses a new AI strategy, you’ll understand what’s actually being talked about.

You Are Not Behind. But You Do Need to Learn Some New Words.

I have been writing code for a long time. Long enough to remember when JSON format was the future, when microservices were going to solve everything, when containers were the thing everyone had to learn or get left behind. Every few years something arrives that feels like it will make what you know irrelevant. Usually it does not. You adapt, you ship, you move on.

AI feels different. And I think the reason it feels different is not the hype. Working in the software industry means we are used to hype. It is that the concepts underneath it do not map cleanly onto anything we already know. A new framework is still a framework. A new cloud service is still a server you do not have to manage. But when someone starts talking about context windows, temperature, embeddings, or hallucination, there is no obvious drawer to put those ideas in. They feel foreign in a way that LINQ or async/await never did.

That discomfort is real, and it is worth sitting with for a moment rather than rushing past it. Because the developers who are going to struggle are not the ones who feel that discomfort. They are the ones who dismiss it, who decide that AI engineering is just calling an API and that they already know how to do that.

The bad news is it is not just calling an API. The good news is the gap between what you know and what you need to know is much smaller than it probably feels right now.

This post is my attempt to name that gap clearly. Not to fill it completely – that takes time and building things – but to provide a map of what is actually new, why it matters, and what kind of developer survives this shift.

The part that is genuinely new

When I first started experimenting with language models I kept trying to find the .NET equivalent of each new concept. What is the context window in terms I already understand? What is hallucination, really? That instinct makes sense. It is how experienced developers learn anything new. But with AI it only gets you so far. Some of these concepts have no good analogy. That is not a reason to panic. It is just a reason to build new mental models rather than borrowing old ones.

Here are the ones that actually matter for a .NET developer trying to understand what AI systems are doing.

Context windows

Forget the word “memory.” A context window is a whiteboard with a maximum size. Everything the model sees in a single interaction – your instructions, the conversation history, any documents you fed it, the user’s question – has to fit on that whiteboard at once. When it does not fit, something has to go, and in most chat applications that means the oldest content gets erased. Not degraded, not summarized automatically. Erased. And the model will not tell you this happened. It will just answer as if that content never existed. If a user told your chatbot early in a conversation that they are running version 2.1 of your product, and that message got pushed off the whiteboard by the time they ask their tenth question, the model will make up an answer based on whatever version it feels like assuming. No exception. No log entry. Just a quietly wrong answer.

You will bump into this as a regular user too – paste a long document into ChatGPT and watch it forget the beginning of the conversation. When you start building systems, this becomes a design decision you have to make deliberately: what gets kept, what gets dropped, and in what order.
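Here is a minimal sketch of one such decision, written in Python for brevity (the logic ports directly to C#). It assumes a crude four-characters-per-token estimate and one particular policy: always keep the system message, then keep the newest messages that still fit. The names `trim_history` and `estimate_tokens` are mine for illustration, not from any library.

```python
from typing import Callable

Message = dict[str, str]  # e.g. {"role": "user", "content": "..."}


def estimate_tokens(text: str) -> int:
    """Crude estimate (assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def trim_history(
    system: Message,
    history: list[Message],
    budget: int,
    count: Callable[[str], int] = estimate_tokens,
) -> tuple[list[Message], list[Message]]:
    """Keep the system message plus the newest messages that fit in `budget`.

    Returns (kept, dropped) so the caller can log or summarize what was lost.
    """
    remaining = budget - count(system["content"])
    cut = len(history)  # index of the oldest message we can still keep
    # Walk from newest to oldest and stop at the first message that does not fit.
    for i in range(len(history) - 1, -1, -1):
        cost = count(history[i]["content"])
        if cost > remaining:
            break
        remaining -= cost
        cut = i
    return [system] + history[cut:], history[:cut]
```

Returning the dropped messages is the design choice that matters here. It turns a silent loss into something you can log, summarize, or pin (for example, the message where the user stated their product version).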

Tokens

A token is roughly four characters of English text, but that is a very rough approximation. Code tokenizes differently. Non-English text tokenizes differently. What matters practically is that every API call is priced and limited in tokens, not characters or words. When you are estimating whether your prompt will fit in a context window, or how much an API call will cost, you are thinking in tokens. Most model providers offer a tokenizer tool. Use it before you assume anything. A good example is Tiktokenizer, a web tool built on OpenAI’s tiktoken tokenizer – you paste any text in and it shows you exactly how the model breaks it into tokens, color coded, with a count. Paste a C# method in there and you will immediately see that code is token-expensive compared to plain English.

For practical purposes when building in .NET, the library to know is SharpToken on NuGet – it is a C# port of OpenAI’s tiktoken tokenizer and lets you count tokens in code before making a call.

Tokens are the basic units that models work with. A token is not always a full word. It can be a word, part of a word, a number, or a punctuation mark. As a rough guide:

- 1 token is about 4 characters

- 1 token is about 0.75 words

- A 500-word document is about 670 tokens

Both input tokens (your prompt) and output tokens (the model’s response) count toward billing. Each model has a maximum token limit – the context window we described above. If your input plus output exceeds this limit, the response gets cut off or the request is rejected.

Understanding tokens matters because they directly affect cost and what you can fit into a single request. Longer prompts cost more, and very long documents may need to be split into chunks before processing.
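The rules of thumb above turn into arithmetic you can run before you ever call an API. This is a rough sketch in Python (it ports directly to C#). The function names are mine, the prices are parameters you supply yourself, and nothing here replaces counting with a real tokenizer.

```python
import math


def estimate_tokens_from_words(words: int, words_per_token: float = 0.75) -> int:
    """Apply the ~0.75 words-per-token rule of thumb, rounding up."""
    return math.ceil(words / words_per_token)


def fits_in_window(input_tokens: int, max_output_tokens: int, context_window: int) -> bool:
    """Input and output share the same window, so budget for both."""
    return input_tokens + max_output_tokens <= context_window


def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,   # hypothetical: take this from your provider's price list
    output_price_per_million: float,  # hypothetical: output is often priced separately
) -> float:
    """Estimated cost of one call, in the same currency as the prices."""
    total = input_tokens * input_price_per_million + output_tokens * output_price_per_million
    return total / 1_000_000
```

For the 500-word document, `estimate_tokens_from_words(500)` gives 667, which is where the "about 670" figure comes from.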

Temperature

This is the setting that controls how much randomness the model injects into its responses. Low temperature means the model tends to pick the most probable next word at each step, which gives you more predictable output. Higher temperature means it explores more of the probability space, which gives you more varied and sometimes more creative output. The reason this matters is that language models are not deterministic by default. Run the same prompt twice and you may get two different responses. That single fact has enormous implications for how you test, how you debug, and how you define what “correct” even means. We will come back to that.
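To make that less abstract, here is a small Python sketch of the usual mechanism: the model's raw scores for each candidate token are divided by the temperature before being turned into probabilities. The scores below are invented for illustration, and real APIs may handle a temperature of exactly 0 as a special case, which this sketch simply rejects.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max so exp() cannot overflow
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Invented scores for three candidate next tokens.
scores = [2.0, 1.0, 0.0]
low = softmax_with_temperature(scores, 0.2)   # top candidate dominates
high = softmax_with_temperature(scores, 2.0)  # probability spreads out
```

At low temperature almost all of the probability lands on the top candidate. At high temperature the alternatives get a real chance of being sampled, which is where the variety comes from.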

Hallucination

This word sounds like something went wrong that you can fix. It did not, and you cannot. A language model generates text by predicting what word is most likely to come next given everything before it. It is extremely good at this. So good that it will produce a confident, fluent, detailed response even when the factually correct answer is “I don’t know.” The model is not lying — it does not have a concept of lying. It is completing a pattern in the way that patterns like this tend to be completed, and sometimes the most statistically plausible completion happens to be wrong. You cannot eliminate hallucination through better prompting alone. You can reduce it significantly – retrieval-augmented generation, which we will build together in a later post, is the main tool for this – but “does this response contain made-up information?” is a question you will be asking about every AI feature you ship, indefinitely.

You can find more info about this topic on Simon’s blog.

Fine-tuning vs prompting

When the model is not behaving the way you want, a developer’s instinct is to fix the source. Fine-tuning feels like fixing the source, because you are modifying the model itself. Most of the time it is the wrong tool. Fine-tuning is slow, expensive, and requires labeled training data you probably do not have. It is genuinely useful for teaching a model a very specific style or domain it was never trained on. But “the model keeps adding preamble I did not ask for” or “I need it to respond in a specific JSON format” – those are prompting problems. Prompting is fast, cheap, reversible, and more powerful than most people expect when done properly. The rule of thumb I use: prompt first, always. Only reach for fine-tuning when you have exhausted what prompting can do.

Embeddings

An embedding is a way to turn text (or images, or audio) into a list of numbers called a vector. This vector captures the meaning of the content, not just the words. Two sentences that say similar things will produce similar vectors, even if they use completely different words.

How do they work?

When you send text to an embedding model, it returns a fixed-length list of numbers (for example, 1,024 numbers). Each number represents some aspect of the meaning. You can then compare two vectors using math (typically cosine similarity or dot product) to determine how similar their meanings are. A search for “how to reset my password” would match “steps to change your login credentials” because the vectors are close, even though no words overlap.
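Cosine similarity itself is only a few lines. The sketch below is in Python (it ports directly to C#), and the three-number vectors are invented stand-ins. Real embeddings come from an embedding model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Invented toy vectors, standing in for real embedding output.
reset_password = [0.9, 0.1, 0.2]      # "how to reset my password"
change_credentials = [0.8, 0.2, 0.3]  # "steps to change your login credentials"
pizza_recipe = [0.1, 0.9, 0.1]        # unrelated text

related = cosine_similarity(reset_password, change_credentials)
unrelated = cosine_similarity(reset_password, pizza_recipe)
```

With these made-up numbers, `related` comes out far higher than `unrelated`, which is the behavior a semantic search relies on.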

Where are they stored?

Embeddings are stored in vector databases, which are specialized systems designed for similarity search. Common options include Amazon OpenSearch Serverless, Pinecone, and pgvector (a PostgreSQL extension). These databases index the vectors so that searches are fast even across millions of entries.
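To see what such a database does for you, here is a deliberately naive in-memory stand-in, sketched in Python. `TinyVectorIndex` is a hypothetical class of mine, not the API of any of the products above. It compares the query against every stored vector, which is exactly the brute-force work real vector databases avoid by building an index.

```python
import heapq
import math


def normalize(vector: list[float]) -> list[float]:
    """Scale a vector to length 1 so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


class TinyVectorIndex:
    """Hypothetical in-memory store with exact, brute-force similarity search."""

    def __init__(self) -> None:
        self._items: list[tuple[str, list[float]]] = []

    def add(self, doc_id: str, vector: list[float]) -> None:
        # Normalize once at write time so searches only need dot products.
        self._items.append((doc_id, normalize(vector)))

    def search(self, query: list[float], k: int = 3) -> list[tuple[str, float]]:
        """Return the k most similar (doc_id, score) pairs, best first."""
        q = normalize(query)
        scored = (
            (doc_id, sum(a * b for a, b in zip(q, vec)))
            for doc_id, vec in self._items
        )
        return heapq.nlargest(k, scored, key=lambda pair: pair[1])
```

This is fine for a few thousand vectors in a prototype. Past that, the linear scan becomes the bottleneck and a real vector database starts to earn its place.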

Embeddings are a key building block for RAG pipelines, semantic search, recommendation systems, and any application where you need to match meaning rather than exact keywords. They act as the bridge between unstructured content and retrieval, letting an application find relevant information even when the query and the document share no keywords.

The part that changes what “done” means

All of the concepts above are things you learn once. Many of them you will pick up naturally just by doing the work. But this next one is different. It does not just add something to your toolkit. It changes how you think about your job.

In traditional software development, done has a clear definition. The tests pass. The spec is met. The edge cases are handled. You can write an assertion that says the output should equal some expected value, run it a thousand times, and trust the result. That is not how AI systems work.

You cannot assert that the model’s response will equal a specific string. You cannot even assert that it will contain specific words. What you can assert is that the response is helpful, accurate, appropriately uncertain, and safe for your particular use case.

That kind of quality measurement has a name in AI engineering: evals (evaluations). They are to AI engineers what unit tests are to software engineers, except they are harder to write, harder to automate, and require you to think about correctness in an entirely different way. Some evals are automated – you can check whether a response contains certain information, or whether it stays within a certain topic. Others require human judgment. Is this response actually helpful? Does it answer the question the user was asking, or the question they literally typed? Would I be comfortable if my manager saw this answer?
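A first automated eval can be very small. The Python sketch below (it ports directly to C#) assumes the crudest possible check, case-insensitive keyword matching, and runs the same prompt several times because the output can differ between runs. `EvalCase`, `passes`, and `pass_rate` are names I made up, and `generate` stands for whatever function calls your model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    must_mention: list[str] = field(default_factory=list)
    must_not_mention: list[str] = field(default_factory=list)


def passes(response: str, case: EvalCase) -> bool:
    """Crude check: required terms present, forbidden terms absent."""
    text = response.lower()
    has_required = all(term.lower() in text for term in case.must_mention)
    has_forbidden = any(term.lower() in text for term in case.must_not_mention)
    return has_required and not has_forbidden


def pass_rate(generate: Callable[[str], str], case: EvalCase, runs: int = 5) -> float:
    """Call the model `runs` times and return the fraction of passing responses."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    passed = sum(passes(generate(case.prompt), case) for _ in range(runs))
    return passed / runs
```

Notice that the result is a rate, not a boolean. Deciding what rate is good enough for your use case is the judgment call that a keyword check cannot make for you.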

This is the shift that I think catches experienced developers most off guard, because it is not a new skill to acquire – it is a new way of thinking about a skill you already have. You already think about quality. You already write tests. But the question changes from “did it return the right value” to “was this good enough.” That ambiguity is uncomfortable but it is also unavoidable. The developers who get comfortable with it quickly are the ones who are going to own AI features at their companies.

What you already have

None of the above makes your existing skills irrelevant. If anything, it makes them more valuable in a specific way.

AI engineering right now has a lot of people who understand the models and very few people who know how to ship things. People who can explain how a transformer works but have never thought about what happens when their service goes down at 2am. People who can build a beautiful demo that impresses a product manager but have never had to defend an architectural decision to a team that has to maintain it for three years.

One of the most important things you get after some experience as a software developer is production thinking. You know the difference between code that works and code that holds. You know how to isolate a problem when something breaks in a way you did not expect. You know how to design a system that fails gracefully rather than catastrophically.

Retrieval-augmented generation (RAG), which we will cover in detail, is fundamentally a data pipeline with a language model at the end.

Agents, which we will also cover later, are AI systems that take actions, call tools, and reason across multiple steps. They are fundamentally state machines with external API calls. You have built both of these shapes before. The insides are new. The shapes are familiar.
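To show the shape, here is a toy agent loop written as an explicit state machine, in Python (it ports directly to C#). Everything in it is hypothetical: `decide` stands in for the language model call, `tools` is a plain dictionary of functions, and the step limit is my own safety choice. Real agent frameworks add a great deal on top of this.

```python
from enum import Enum, auto
from typing import Callable


class State(Enum):
    THINK = auto()  # ask the model what to do next
    ACT = auto()    # run the tool the model asked for
    DONE = auto()


def run_agent(
    decide: Callable[[str, list[str]], dict],  # hypothetical stand-in for a model call
    tools: dict[str, Callable[[str], str]],
    question: str,
    max_steps: int = 5,
) -> str:
    """Loop THINK -> ACT -> THINK until the model answers or the step limit hits."""
    state = State.THINK
    observations: list[str] = []
    pending: dict = {}
    answer = ""
    steps = 0
    while state is not State.DONE:
        if state is State.THINK:
            if steps >= max_steps:
                answer = "Stopped: step limit reached."
                state = State.DONE
                continue
            steps += 1
            pending = decide(question, observations)
            if pending["type"] == "answer":
                answer = pending["text"]
                state = State.DONE
            else:
                state = State.ACT
        elif state is State.ACT:
            tool = tools.get(pending["name"])
            if tool is None:
                # Feed the failure back to the model instead of crashing.
                observations.append(f"error: unknown tool {pending['name']}")
            else:
                observations.append(tool(pending["input"]))
            state = State.THINK
    return answer
```

The step limit and the unknown-tool branch are the production thinking: the model decides the transitions, but you decide what happens when it loops forever or asks for something that does not exist.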

The companies hiring right now are not looking for AI researchers. They are looking for software engineers who can own an AI feature end to end – who understand the concepts, can make architectural decisions, can measure quality, and can be accountable when something goes wrong. That is a description of a good senior developer who has spent a few months learning new vocabulary.

What comes next

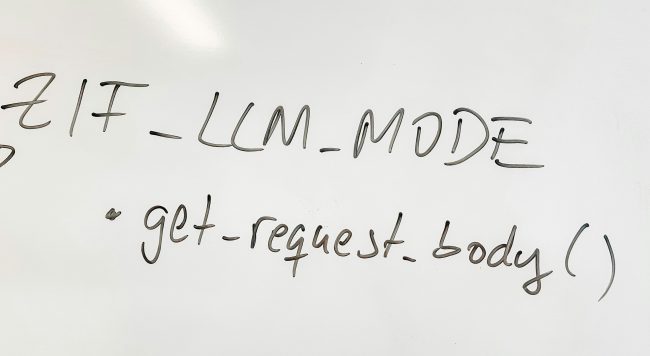

The rest of this series is built around building things. I have been working on a RAG system in C# – a chatbot that answers questions based on a set of documents – and I will walk through how I built it, what broke, and what I learned. After that, an MCP server in C#, which is how you give a language model the ability to call your own code as a tool. These are the two most practical patterns in AI engineering right now, and they are both very buildable for someone with a .NET background.

But before the code, you needed the vocabulary. Now you have it.

—

progress4success.com – Cosmin is a tech lead writing about staying relevant in the AI era.